25 Summative Assessment Examples

Chris Drew (PhD)

Dr. Chris Drew is the founder of the Helpful Professor. He holds a PhD in education and has published over 20 articles in scholarly journals. He is the former editor of the Journal of Learning Development in Higher Education.

Summative assessment is a type of achievement assessment that occurs at the end of a unit of work. Its goal is to evaluate what students have learned or the skills they have developed. It is often contrasted with formative assessment, which takes place during a unit of work to give feedback to teachers and learners.

Performance is evaluated against specific criteria and usually results in a final grade or percentage.

Individual students' scores are then compared with established benchmarks, and the outcome can have significant consequences for the student.

A traditional example of summative evaluation is a standardized test such as the SATs. The SATs help colleges determine which students should be admitted.

However, summative assessment doesn’t have to be in a paper-and-pencil format. Project-based learning, performance-based assessments, and authentic assessments can all be forms of summative assessment.

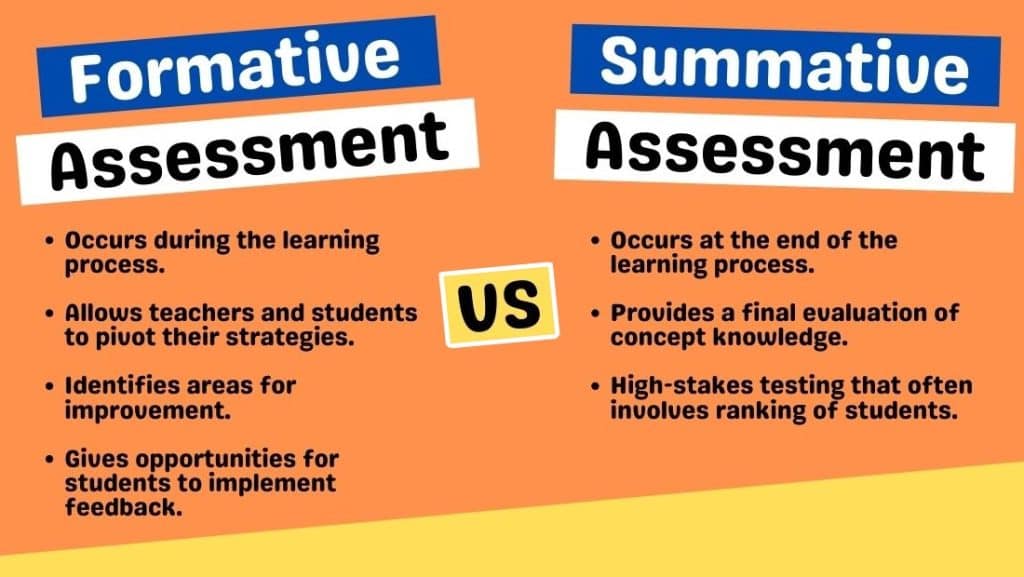

Summative vs Formative Assessment

Summative assessments are one of two main types of assessment. The other is formative assessment.

Whereas summative assessment occurs at the end of a unit of work, formative assessment takes place during the unit so that teachers and students can get feedback on progress and make adjustments to stay on track.

Summative assessments tend to be much higher-stakes because they reflect a final judgment about a student’s learning, skills, and knowledge:

“Passing bestows important benefits, such as receiving a high school diploma, a scholarship, or entry into college, and failure can affect a child’s future employment prospects and earning potential as an adult” (States et al., 2018, p. 3).

Summative Assessment Examples


1. Multiple-Choice Exam

Definition: A multiple-choice exam is an assessment where students select the correct answer from several options.

Benefit: This format allows for quick and objective grading of students’ knowledge on a wide range of topics.

Limitation: It can encourage guessing and may not measure deep understanding or the ability to synthesize information.

Design Tip: Craft distractors that are plausible to better assess students’ mastery of the material.

2. Final Essay

Definition: A final essay is a comprehensive writing assessment that requires students to articulate their understanding and analysis of a topic.

Benefit: Essays assess critical thinking, reasoning, and the ability to communicate ideas in writing.

Limitation: Grading can be subjective and time-consuming, potentially leading to inconsistencies.

Design Tip: Provide clear, detailed rubrics that specify criteria for grading to ensure consistency and transparency.

3. Lab Practical Exam

Definition: A lab practical exam tests students’ ability to perform scientific experiments and to apply theoretical knowledge in practice.

Benefit: It directly assesses practical skills and procedural knowledge in a realistic setting.

Limitation: These exams can be resource-intensive and challenging to standardize across different settings or institutions.

Design Tip: Design scenarios that replicate real-life problems students might encounter in their field.

4. Reflective Journal

Definition: A reflective journal is an assessment where students regularly record learning experiences, personal growth, and emotional responses.

Benefit: Encourages continuous learning and self-assessment, helping students link theory with practice.

Limitation: It’s subjective and heavily dependent on students’ self-reporting and engagement levels.

Design Tip: Provide prompts to guide reflections and ensure they are focused and meaningful.

5. Open-Book Examination

Definition: An open-book examination allows students to refer to their textbooks and notes while answering questions.

Benefit: Tests students’ ability to locate and apply information rather than memorize facts.

Limitation: It may not accurately gauge memorization or the ability to quickly recall information.

Design Tip: Focus questions on problem-solving and application to prevent students from merely copying information.

6. Group Presentation

Definition: A group presentation is an assessment where students collaboratively prepare and deliver a presentation on a given topic.

Benefit: Enhances teamwork skills and the ability to communicate ideas publicly.

Limitation: Individual contributions can be uneven, making it difficult to assess students individually.

Design Tip: Clearly define roles and expectations for all group members to ensure fair participation.

7. Poster Presentation

Definition: A poster presentation requires students to summarize their research or project findings on a poster and often defend their work in a public setting.

Benefit: Develops skills in summarizing complex information and public speaking.

Limitation: Space limitations may restrict the amount of information that can be presented.

Design Tip: Encourage the use of clear visual aids and a logical layout to effectively communicate key points.

8. Infographic

Definition: An infographic is a visual representation of information, data, or knowledge intended to present information quickly and clearly.

Benefit: Helps develop skills in designing effective and attractive presentations of complex data.

Limitation: Over-simplification might lead to misinterpretation or omission of critical nuances.

Design Tip: Teach principles of visual design and data integrity to enhance the educational value of infographics.

9. Portfolio Assessment

Definition: Portfolio assessment involves collecting a student’s work over time, demonstrating learning, progress, and achievement.

Benefit: Provides a comprehensive view of a student’s abilities and improvements over time.

Limitation: Can be logistically challenging to manage and time-consuming to assess thoroughly.

Design Tip: Use clear guidelines and checklists to help students know what to include and ensure consistency in assessment.

10. Project-Based Assessment

Definition: Project-based assessment evaluates students’ abilities to apply knowledge to real-world challenges through extended projects.

Benefit: Encourages practical application of skills and fosters problem-solving and critical thinking.

Limitation: Time-intensive and may require significant resources to implement effectively.

Design Tip: Align projects with real-world problems relevant to the students’ future careers to increase engagement and applicability.

11. Oral Exams

Definition: In an oral exam, students answer an examiner’s spoken questions, which allows their knowledge and thinking skills to be assessed.

Benefit: Allows immediate clarification of answers and assessment of communication skills.

Limitation: Can be stressful for students and result in performance anxiety, affecting their scores.

Design Tip: Create a supportive environment and clear guidelines to help reduce anxiety and improve performance.

12. Capstone Project

Definition: A capstone project is a multifaceted assignment that serves as a culminating academic and intellectual experience for students.

Benefit: Integrates knowledge and skills from various areas, fostering holistic learning and innovation.

Limitation: Requires extensive time and resources to supervise and assess effectively.

Design Tip: Ensure clear objectives and support structures are in place to guide students through complex projects.

Real-Life Summative Assessments

- Final Exams for a College Course: At the end of a university semester, there is usually a final exam that determines whether you pass. There are often also formative tests midway through the course (known in England as ICAs and in the USA as midterms).

- SATs: The SATs are the primary United States college admissions tests. They are a summative assessment because they provide a final grade that can determine whether a student gets into college or not.

- AP Exams: AP Exams take place at the end of Advanced Placement courses and are also used to gauge college readiness.

- Piano Exams: The ABRSM administers piano exams to test if a student can move up a grade (from grades 1 to 8), which demonstrates their achievements in piano proficiency.

- Sporting Competitions: A sporting competition such as a swimming race is summative because it produces a result or ranking that cannot be reversed. However, as there will always be future competitions, a race could also be treated as formative, especially if it is not the ultimate competition in a given sport.

- Driver’s License Test: A driver’s license test is pass-fail and represents the culmination of practice in driving skills.

- IELTS: Language tests like IELTS are summative assessments of a person’s ability to use a language (in the case of IELTS, English).

- Citizenship Test: Citizenship tests are pass-fail, and often high-stakes. There is no room for formative assessment here.

- Dissertation Submission: A final dissertation submission for a postgraduate degree is often sent to an external reviewer who will give it a pass-fail grade.

- CPR Course: Trainees in a 2-day first-aid and CPR course have to perform on a dummy while being observed by a licensed trainer.

- PISA Tests: PISA is a standardized test run by the OECD that provides final scores for students’ mathematics, science, and reading literacy across the world, from which a league table of nations is produced.

- The MCATs: The MCATs are tests that students sit to gain entry to medical school. They require significant study and preparation before test day.

- The Bar: The Bar exam is an exam prospective lawyers must pass in order to be admitted to practice law in their jurisdiction.

Summative assessment allows teachers to determine whether their students have reached the defined behavioral objectives. It can occur at the end of a unit, an academic term, or an academic year.

The assessment usually results in a grade or a percentage that is recorded in the student’s file. These scores are then used in a variety of ways and are meant to provide a snapshot of the student’s progress.

Although the SAT and ACT are common examples, summative assessment can take many forms. Teachers might ask their students to give an oral presentation, perform a short role-play, or complete a project-based assignment.

Brookhart, S. M. (2004). Assessment theory for college classrooms. New Directions for Teaching and Learning, 100, 5–14. https://doi.org/10.1002/tl.165

Dixon, D. D., & Worrell, F. C. (2016). Formative and summative assessment in the classroom. Theory into Practice, 55, 153–159. https://doi.org/10.1080/00405841.2016.1148989

Geiser, S., & Santelices, M. V. (2007). Validity of high-school grades in predicting student success beyond the freshman year: High-school record vs. standardized tests as indicators of four-year college outcomes. Research and Occasional Paper Series. Berkeley, CA: Center for Studies in Higher Education, University of California.

Kibble, J. D. (2017). Best practices in summative assessment. Advances in Physiology Education, 41(1), 110–119. https://doi.org/10.1152/advan.00116.2016

Lungu, S., Matafwali, B., & Banja, M. K. (2021). Formative and summative assessment practices by teachers in early childhood education centres in Lusaka, Zambia. European Journal of Education Studies, 8(2), 44–65.

States, J., Detrich, R., & Keyworth, R. (2018). Summative Assessment (Wing Institute Original Paper). https://doi.org/10.13140/RG.2.2.16788.19844


Nursing Course and Curriculum Development – University of Brighton

Innovative – inspirational – inclusive.

Summative assessment

Summative assessment, i.e. assessment of learning

Summative assessment enables students to demonstrate the extent of their learning, and the results contribute to their overall degree classification.

The module specification must state (in the ‘Assessment tasks’ section):

- The details of the assessment for the module

- The minimum pass mark

- The type of assessment task and weighting

- At each academic level, at least one module will need to offer flexibility through an alternative assessment task to support inclusive practice

Examples of summative assessments (and alternatives):

- Oral presentation (Poster presentation, Webinar, Podcast)

- Leaflet (webpage)

- Written reflection (Blog, Vlog)

- Clinical Link Learning Activities (open skill)

- Practical exam (OSCE, VIVA)

- Written exam (open book)

- Group task, e.g. students work in a group to write a 1000-word essay (social interaction develops academic writing skills and provides positive social support)

Whilst a variety of assessment types can help students who have different strengths, it is important that assessment tasks are repeated to enable feed forward.

Aim to have clinically relevant assessment tasks that follow the nursing model (nursing assessment / plan / implement / evaluate), e.g.:

- assess the needs of a person and family affected by

- plan a relevant care package or approach to care which could be carried out in your area

- create a question / problem that replicates the real-life context as closely as possible

- compare different theories in the same situation

- see Clinical Link Learning Activities for more examples

Parameters for a 20-credit module (equivalent to 35 hours of student effort):

- 2500 – 3500 word essay

- 2.5 – 3 hour written exam

- 20 – 25 minute presentation

- 5 – 6 minute video production

For modules with more than one assessment task, the output of each task is proportionate to its weighting, e.g. for two tasks weighted at 50% each, a 10-minute presentation and a 1500-word essay.
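As an illustration of the proportionality rule, the sketch below scales the 20-credit parameters listed above by a task's weighting. It assumes "proportionate" means straight linear scaling; the helper name `scaled_range` and the dictionary keys are hypothetical and not part of any university system.

```python
# Hypothetical helper: scales the full-module output ranges for a
# 20-credit module by a task's weighting. Assumes "proportionate" means
# simple linear scaling; local regulations may round or cap differently.

# Ranges for a single task carrying 100% of the module (from the list above).
FULL_MODULE_RANGES = {
    "essay_words": (2500, 3500),
    "exam_hours": (2.5, 3.0),
    "presentation_minutes": (20, 25),
    "video_minutes": (5, 6),
}


def scaled_range(task: str, weighting: float) -> tuple[float, float]:
    """Return the (low, high) output range for a task worth `weighting` (0 < w <= 1)."""
    if not 0 < weighting <= 1:
        raise ValueError("weighting must be greater than 0 and at most 1")
    low, high = FULL_MODULE_RANGES[task]
    return (low * weighting, high * weighting)


if __name__ == "__main__":
    # Two tasks at 50% each, as in the example above.
    print(scaled_range("presentation_minutes", 0.5))  # (10.0, 12.5)
    print(scaled_range("essay_words", 0.5))           # (1250.0, 1750.0)
```

With a 50% weighting the presentation range comes out at 10 to 12.5 minutes and the essay range at 1250 to 1750 words, which is consistent with the 10-minute presentation and 1500-word essay given in the example.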

Moderation:

Every marker marks the same submission, and the markers then discuss the feedback and mark to agree the standard for marking all remaining scripts; this supports consistency.

There is a risk that latent criteria are applied to the marking of assessments e.g. academic writing style or other content not part of the learning outcomes. It is therefore really important that what markers are expecting to assess aligns with the learning outcomes and the assessment task.


Simulation-based summative assessment in healthcare: an overview of key principles for practice

Clément Buléon, Laurent Mattatia, Rebecca D. Minehart, Jenny W. Rudolph, Fernande J. Lois, Erwan Guillouet, Anne-Laure Philippon, Olivier Brissaud, Antoine Lefevre-Scelles, Dan Benhamou, François Lecomte, the SoFraSimS Assessment with Simulation group, Anne Bellot, Isabelle Crublé, Guillaume Philippot, Thierry Vanderlinden, Sébastien Batrancourt, Claire Boithias-Guerot, Jean Bréaud, Philine de Vries, Louis Sibert, Thierry Sécheresse, Virginie Boulant, Louis Delamarre, Laurent Grillet, Marianne Jund, Christophe Mathurin, Jacques Berthod, Blaise Debien, Olivier Gacia, Guillaume Der Sahakian, Sylvain Boet, Denis Oriot, Jean-Michel Chabot.


Received 2022 Mar 2; Accepted 2022 Nov 30; Collection date 2022.

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/ . The Creative Commons Public Domain Dedication waiver ( http://creativecommons.org/publicdomain/zero/1.0/ ) applies to the data made available in this article, unless otherwise stated in a credit line to the data.

Healthcare curricula need summative assessments relevant to and representative of clinical situations to best select and train learners. Simulation provides multiple benefits with a growing literature base proving its utility for training in a formative context. Advancing to the next step, “the use of simulation for summative assessment” requires rigorous and evidence-based development because any summative assessment is high stakes for participants, trainers, and programs. The first step of this process is to identify the baseline from which we can start.

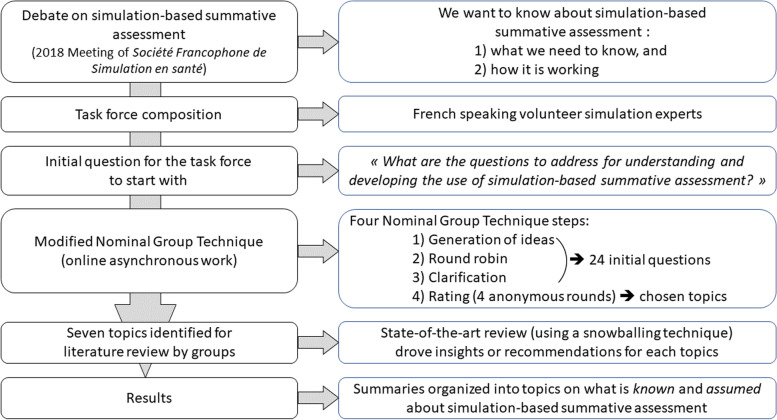

First, using a modified nominal group technique, a task force of 34 panelists defined topics to clarify the why, how, what, when, and who of using simulation-based summative assessment (SBSA). Second, each topic was explored by a group of panelists using a state-of-the-art literature review technique, with a snowball method to identify further references. Our goal was to identify current knowledge and potential recommendations for future directions. Results were cross-checked among groups and reviewed by an independent expert committee.

Seven topics were selected by the task force: “What can be assessed in simulation?”, “Assessment tools for SBSA”, “Consequences of undergoing the SBSA process”, “Scenarios for SBSA”, “Debriefing, video, and research for SBSA”, “Trainers for SBSA”, and “Implementation of SBSA in healthcare”. Together, these seven explorations provide an overview of what is known and can be done with relative certainty, and what is unknown and probably needs further investigation. Based on this work, we highlighted the trustworthiness of different summative assessment-related conclusions, the remaining important problems and questions, and their consequences for participants and institutions of how SBSA is conducted.

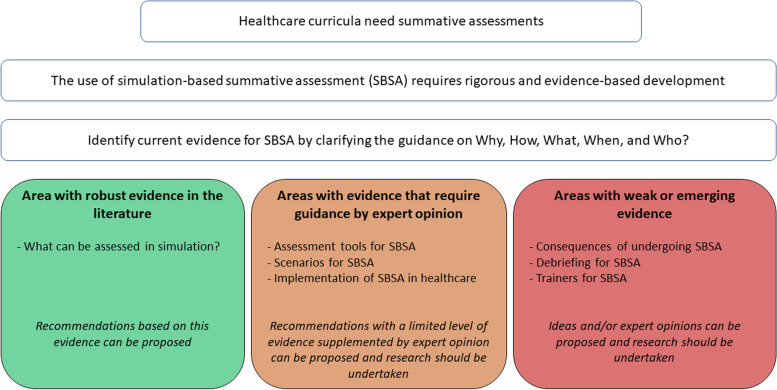

Our results identified among the seven topics one area with robust evidence in the literature (“What can be assessed in simulation?”), three areas with evidence that require guidance by expert opinion (“Assessment tools for SBSA”, “Scenarios for SBSA”, “Implementation of SBSA in healthcare”), and three areas with weak or emerging evidence (“Consequences of undergoing the SBSA process”, “Debriefing for SBSA”, “Trainers for SBSA”). Using SBSA holds much promise, with increasing demand for this application. Due to the important stakes involved, it must be rigorously conducted and supervised. Guidelines for good practice should be formalized to help with conduct and implementation. We believe this baseline can direct future investigation and the development of guidelines.

Keywords: Medical education, Summative, Assessment, Simulation, Education, Competency-based education

There is a critical need for summative assessment in healthcare education [1]. Summative assessment is high stakes, both for graduation certification and for recertification in continuing medical education [2–5]. Given these consequences, the decision to validate or not validate competencies must be reliable, based on rigorous processes, and supported by data [6]. Current methods of summative assessment, such as written or oral exams, are imperfect and need to be improved to better benefit programs, learners, and ultimately patients [7]. A good summative assessment should reflect clinical practice closely enough to provide a meaningful assessment of competencies [1, 8]. While some could argue that oral exams are a form of verbal simulation, hands-on simulation can be seen as a way to complement current summative assessments and enhance their accuracy by bringing these tools closer to assessing the necessary competencies [1, 2].

Simulation is now well established in the healthcare curriculum as part of a modern, comprehensive approach to medical education (e.g., competency-based medical education) [9–11]. Rich in various modalities, simulation provides training in a wide range of technical and non-technical skills across all disciplines. Simulation adds value to the educational training process, particularly through feedback and formative assessment [9]. With the widespread use of simulation in the formative setting, the next logical step is using simulation for summative assessment.

The shift from formative to summative assessment using simulation in healthcare must be thoughtful, evidence-based, and rigorous. Program directors and educators may find it challenging to move from formative to summative use of simulation. There is currently limited experience (e.g., OSCEs [12, 13]) but no established guidelines on how to proceed. The evidence needed to establish the feasibility and validity of simulation-based summative assessment (SBSA) in healthcare education, and to define its requirements, has not yet been formally gathered. With this evidence, we can hope to build a rigorous and fair pathway to SBSA.

The purpose of this work is to review current knowledge of SBSA by clarifying the guidance on why, how, what, when, and who. We aim to identify areas (i) with robust evidence in the literature, (ii) with evidence that requires guidance by expert opinion, and (iii) with weak or emerging evidence. This may serve as a basis for future research and guideline development for the safe and effective use of SBSA (Fig. 1).

Fig. 1. Study question and topic level of evidence

First, we used a modified Nominal Group Technique (NGT) to define the further questions to be explored in order to gain the most comprehensive understanding of SBSA. We followed recommendations on NGT for conducting and reporting this research [14]. Second, we conducted state-of-the-art literature reviews to assess current knowledge on the questions/topics identified by the modified NGT. This work did not require Institutional Review Board involvement.

A discussion on the use of SBSA was led by executive committee members of the Société Francophone de Simulation en Santé (SoFraSimS) in a plenary session involving congress participants at the SoFraSimS annual meeting in Strasbourg, France, in May 2018. Key points addressed during this meeting were the growing interest in using SBSA, its informal uses, and its inclusion in some formal healthcare curricula. The discussion identified that these important topics lacked current guidelines. To reduce knowledge gaps, the SoFraSimS executive committee assigned one of its members (FL, one of the authors) to lead a task force on SBSA and act as its NGT facilitator. The task force’s mission was to map the current landscape of SBSA, including current knowledge and gaps, and potentially to identify expert recommendations.

Task force characteristics

The task force panelists were recruited from among volunteer healthcare simulation trainers in French-speaking countries after a call for applications by SoFraSimS in May 2019. Recruiting criteria were a minimum of 5 years of experience in simulation and direct involvement in developing or running simulation programs. Thirty-four panelists (12 women and 22 men) from 3 countries (Belgium, France, Switzerland) were included. Twenty-three were physicians and 11 were nurses, and 12 in total held academic positions. All had been experienced simulation trainers for more than 7 years and were involved in, or responsible for, initial training or continuing education programs using simulation. The task force leader (FL) was responsible for recruiting panelists, organizing and coordinating the modified NGT, synthesizing responses, and writing the final report. A facilitator (CB) assisted the task force leader with the modified NGT, the synthesis of responses, and the writing of the final report. Both NGT facilitators (FL and CB) had more than 14 years of experience in simulation and experience in simulation research, and were responsible for developing and running simulation programs.

First part: initial question and modified nominal group technique (NGT)

To answer the challenging question “What do we need to know for a safe and effective SBSA practice?”, and following the French Haute Autorité de Santé guidelines [15], we applied a modified nominal group technique (NGT) approach [16] between September and October 2019. The goal of our modified NGT was to define the further questions to be explored to gain the most comprehensive understanding of current SBSA (Fig. 2). The modifications to NGT included interactions that were not in person and, for some panelists, asynchronous. These modifications were introduced because of the geographic dispersion of the panelists across multiple countries and the context of the COVID-19 pandemic.

Fig. 2. Study flowchart

The first two steps of the NGT (generation of ideas and round robin), facilitated by the task force leader (FL), were conducted online, simultaneously and asynchronously, via email exchanges and online surveys over a 6-week period. To initiate the first step (generation of ideas), the task force leader (FL) sent an initial non-exhaustive literature review of 95 articles and proposed the following initial items for reflection: definition of assessment, educational principles of simulation, place of summative assessment and its implementation, assessment of technical and non-technical skills in initial training, continuing education, and interprofessional training. The task force leader (FL) asked the panelists to formulate topics or questions to propose for exploration in Part 2, based on their knowledge and the literature provided. Panelists independently elaborated proposals and sent them back to the task force leader (FL), who regularly synthesized them and sent the status of the questions/topics to the whole task force, preserving the anonymity of the contributors and asking panelists to check the accuracy of the synthesized elements (second step, as a “round robin”).

The third step of the NGT (clarification) was carried out during a 2-h video conference session. All panelists were able to discuss the proposed ideas, group the ideas into topics, and make the necessary clarifications. As a result of this step, 24 preliminary questions were defined for the fourth step (Supplemental Digital Content 1).

The fourth step of the NGT (vote) consisted of four distinct asynchronous and anonymous online vote rounds that led to a final set of topics with related sub-questions (Supplemental Digital Content 2). Panelists were asked to vote to regroup, separate, keep, or discard questions/topics. All vote rounds followed similar validation rules. We [NGT facilitators (FL and CB)] kept items (either questions or topics) with more than 70% approval ratings by panelists. We reworded and resubmitted in the next round all items with 30–70% approval. We discarded items with less than 30% approval. The task force discussed discrepancies and reached final ratings with complete agreement on all items. For each round, we sent reminders to reach a minimum participation rate of 80% of the panelists. Then, we split the task force into 7 groups, one for each of the 7 topics defined at the end of the vote (step 4).
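The vote-round rules above amount to a short decision procedure. The Python sketch below is illustrative only: the function names are hypothetical, and it assumes that the approval rate is computed over the votes cast in a round (the denominator is not specified) and that an item at exactly 70% or exactly 30% falls into the reword-and-resubmit band.

```python
from enum import Enum


class Decision(Enum):
    KEEP = "keep"
    REWORD = "reword and resubmit"
    DISCARD = "discard"


KEEP_ABOVE_PCT = 70          # more than 70% approval: keep
DISCARD_BELOW_PCT = 30       # less than 30% approval: discard
MIN_PARTICIPATION_PCT = 80   # minimum share of panelists voting in a round


def classify(approvals: int, votes: int) -> Decision:
    """Apply the approval thresholds to one item (question or topic)."""
    if votes <= 0:
        raise ValueError("an item needs at least one vote")
    # Integer comparison (approvals / votes > 70 / 100) avoids float rounding.
    if approvals * 100 > KEEP_ABOVE_PCT * votes:
        return Decision.KEEP
    if approvals * 100 >= DISCARD_BELOW_PCT * votes:
        return Decision.REWORD  # 30-70% inclusive, resubmitted next round
    return Decision.DISCARD


def run_round(items: dict[str, int], votes: int, panel_size: int) -> dict[str, Decision]:
    """Classify every item once participation has reached the minimum.

    `items` maps each item to its number of approvals among the `votes` cast.
    """
    if votes * 100 < MIN_PARTICIPATION_PCT * panel_size:
        raise RuntimeError("participation below 80%: send reminders before closing the round")
    return {item: classify(approvals, votes) for item, approvals in items.items()}


if __name__ == "__main__":
    # Hypothetical round: 28 of the 34 panelists voted on three invented items.
    result = run_round({"Q1": 25, "Q2": 14, "Q3": 5}, votes=28, panel_size=34)
    for item, decision in result.items():
        print(item, decision.value)
```

With 34 panelists, the 80% rule means at least 28 votes are needed before a round can be closed, since 27 votes is only about 79%.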

Second part: literature review

From November 2019 to October 2020, each group identified existing literature containing the current knowledge and potential recommendations on the topic it was to address. This identification was based on a non-systematic review of the existing literature. To identify existing literature, the groups conducted state-of-the-art reviews [17] and expanded them with a snowballing literature review technique [18] based on the articles’ references. The literature selected by each group was added to the task force's common library on SBSA in healthcare as the search was conducted.

For references, we searched electronic databases (MEDLINE), gray literature databases (including digital theses), simulation societies’ and centers’ websites, generic web searches (e.g., Google Scholar), and reference lists from articles. We selected publications related to simulation in healthcare using the keywords “summative assessment” and “summative evaluation,” as well as specific keywords related to each topic. The search was iterative, seeking all available data until saturation was achieved. Ninety-five references were initially provided to the task force by the task force leader (FL). At the end of the work, the task force common library contained a total of 261 references.
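The snowballing step can be pictured as a breadth-first walk over reference lists that stops when no new relevant paper turns up, which is one way to operationalize "saturation". This is a hedged Python sketch, not the task force's actual procedure: `references_of` and `is_relevant` are hypothetical callbacks standing in for the manual work of reading reference lists and screening papers against the topic keywords, and the toy citation graph is invented for illustration.

```python
from collections import deque
from typing import Callable, Iterable


def backward_snowball(
    seeds: Iterable[str],
    references_of: Callable[[str], Iterable[str]],
    is_relevant: Callable[[str], bool],
) -> set[str]:
    """Expand a seed library by following reference lists (backward snowballing).

    The loop ends at saturation: every paper in the library has had its
    references checked and no new relevant paper remains to be added.
    """
    library: set[str] = set()
    to_check: deque = deque()
    for paper in seeds:  # seeds are assumed to be pre-screened
        if paper not in library:
            library.add(paper)
            to_check.append(paper)
    while to_check:
        paper = to_check.popleft()
        for ref in references_of(paper):
            # Screen each cited paper; only relevant, unseen ones are added
            # and queued so that their own reference lists are read later.
            if ref not in library and is_relevant(ref):
                library.add(ref)
                to_check.append(ref)
    return library


if __name__ == "__main__":
    # Invented toy citation graph: paper -> papers it cites.
    toy_refs = {
        "A": ["B", "C"],
        "B": ["D"],
        "C": ["B", "X"],  # "X" is off-topic and fails screening
        "D": [],
        "X": ["E"],       # so "E" is never reached through "X"
        "E": [],
    }
    found = backward_snowball(["A"], lambda p: toy_refs.get(p, []), lambda p: p != "X")
    print(sorted(found))  # ['A', 'B', 'C', 'D']
```

The screening callback matters as much as the traversal: excluding an off-topic paper also cuts off everything reachable only through its reference list.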

Techniques to enhance trustworthiness from primary reports to the final report

The groups’ primary reports were reviewed and critiqued by other groups. After group cross-reviewing, primary reports were compiled by NGT facilitators (FL and CB) in a single report. This report, responding to the 7 topics, was drafted in December 2020 and submitted as a single report to an external review committee composed of 4 senior experts in education, training, and research from 3 countries (Belgium, Canada, France) with at least 15 years of experience in simulation. NGT facilitators (FL and CB) responded directly to reviewers when possible and sought assistance from the groups when necessary. The final version of the report was approved by the SoFraSimS executive committee in January 2021.

First part: modified nominal group technique (NGT)

By their nature, the first two steps of the NGT (generation of ideas and “round robin”) did not produce results. The third step (clarification phase) identified 24 preliminary questions (Supplemental Digital Content 1) to be submitted to the fourth step (vote). The 4 rounds of voting (step 4) resulted in 7 topics with sub-questions (Supplemental Digital Content 2): (1) “What can be assessed in simulation?”, (2) “Assessment tools for SBSA”, (3) “Consequences of undergoing the SBSA process”, (4) “Simulation scenarios for SBSA”, (5) “Debriefing, video, research and SBSA strategies”, (6) “Trainers for SBSA”, and (7) “Implementation of SBSA in healthcare”. These 7 topics and their sub-questions were the starting point for each group’s state-of-the-art literature review in the second part.

For each of the 7 topics, the groups highlighted what appears to be validated in the literature, the remaining important problems and questions, and their consequences for participants and institutions of how SBSA is conducted. Results in this section present the major ideas and principles from the literature review, including their nuances where necessary.

What can be assessed in simulation?

Healthcare faculty and institutions must ensure that each graduate is ready to practice. Readiness to practice implies mastering certain competencies, which in turn depends on learning them appropriately. The competency approach involves explicitly defining the core competencies that must be acquired to be a “good professional.” Professional competency could be defined as the ability of a professional to use the judgment, knowledge, skills, and attitudes associated with their profession to solve complex problems [ 19 – 21 ]. Competency is a complex “knowing how to act” based on the effective mobilization and combination of a variety of internal and external resources in a range of situations [ 19 ]. Competency is not directly observable; it is the performance in a situation that can be observed [ 19 ]. Performance can vary depending on human factors such as stress and fatigue. During simulation, competencies can be assessed by observing “key” actions using assessment tools [ 22 ]. The limitations of simulation must be considered when defining the assessable competencies, because not all simulation methods are equally suited to assessing specific competencies [ 22 ].

Most healthcare competencies can be assessed with simulation throughout a curriculum if certain conditions are met. First, the competency being assessed summatively must already have been assessed formatively with simulation [ 23 , 24 ]. Second, validated assessment tools must be available to conduct this summative assessment [ 25 , 26 ]. These tools must be reliable, objective, reproducible, acceptable, and practical [ 27 – 30 ]. The small number of currently validated tools limits the use of simulation for competency certification [ 31 ]. Third, it is neither necessary nor desirable to certify all competencies [ 32 ]. The situations chosen must occur sufficiently often in the student’s future professional practice (or be potentially impactful for the patient) and must be hard or impossible to assess and validate in other circumstances (e.g., clinical internships) [ 2 ]. Fourth, simulation can be used for certification throughout the curriculum [ 33 – 35 ]. Finally, the use of simulation throughout the curriculum may be limited by a lack of logistical resources [ 36 ]. Based on our findings in the literature, we have summarized in Table 1 the educational considerations for implementing SBSA.

Considerations for implementing a summative assessment with simulation

Assessment tools for simulation-based summative assessment

One of the challenges of assessing competency lies in the quality of the measurement tools [ 31 ]. A tool that allows raters to collect data must also allow them to give meaning to their assessment, while ensuring that it actually measures what it is intended to measure [ 25 , 37 ]. A tool must be valid (capable of measuring the assessed competency with fidelity) and reliable (providing reproducible data) [ 38 ]. Since a competency is not directly measurable, it will be analyzed on the basis of learning expectations, the most “concrete” and observable form of a competency [ 19 ]. Several authors have defined the concept of validity and described the steps to achieve it [ 38 – 41 ]. Despite different validation approaches, the objectives are similar: to ensure that the tool is valid, that the scoring items reflect the assessed competency, and that the contents are appropriate for the targeted learners and raters [ 20 , 39 , 42 , 43 ]. A tool should have psychometric characteristics that give users confidence in its reproducibility, discriminatory power, reliability, and external consistency [ 44 ]. One way to ensure that a tool has acceptable validity is to compare it with existing, validated tools that assess the same skills in the same learners. Finally, it is important to consider the consequences of the test to determine whether it best discriminates competent students from others [ 38 , 43 ].
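The comparison with an existing validated tool described above is often quantified with a correlation between the two tools’ scores for the same learners. The sketch below is a minimal illustration, assuming both tools yield a numeric total score per learner; the function name `pearson_r` and the scores are hypothetical, and the source does not prescribe a particular statistic.

```python
import math


def pearson_r(new_tool_scores, reference_scores):
    """Pearson correlation between a new tool and a validated reference tool.

    Both lists hold scores for the same learners, in the same order.
    """
    n = len(new_tool_scores)
    if n != len(reference_scores) or n < 2:
        raise ValueError("need at least two paired scores of equal length")
    mean_x = sum(new_tool_scores) / n
    mean_y = sum(reference_scores) / n
    # Sum of cross-products of deviations from the means (covariance numerator)
    cov = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(new_tool_scores, reference_scores))
    # Sums of squared deviations (variance numerators)
    ss_x = sum((x - mean_x) ** 2 for x in new_tool_scores)
    ss_y = sum((y - mean_y) ** 2 for y in reference_scores)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("undefined when one tool gives every learner the same score")
    return cov / math.sqrt(ss_x * ss_y)


# Hypothetical total scores for five learners rated with both tools
new_tool = [12, 15, 9, 18, 14]
reference = [30, 36, 25, 41, 33]
print(round(pearson_r(new_tool, reference), 3))
```

A high correlation is only one source of validity evidence (relationship to other variables); it does not by itself show that the new tool is valid for summative use.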

Like a diagnostic score, a relevant assessment tool must be specific [ 30 , 39 , 41 ]. A tool is not intrinsically good or bad; it becomes valid through a rigorous validation process [ 39 , 41 , 42 ]. This process determines whether the tool measures what it is supposed to measure and whether this measurement is reproducible at different times (test–retest) or between 2 observers rating simultaneously. It also determines whether the tool’s results correlate with another measure of the same ability or competency and whether the consequences of the tool’s results are related to the learners’ actual competency [ 38 ].
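When 2 observers score the same performances simultaneously, agreement on categorical checklist items is commonly summarized with Cohen’s kappa, which corrects raw agreement for the agreement expected by chance. The sketch below assumes each rater marks each key action as performed (1) or not performed (0); the function name and the ratings are hypothetical, not data from the cited studies.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning nominal labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: proportion of items on which the two raters agree
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal label frequencies
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if p_e == 1:
        # Both raters used one identical label throughout; treated here as perfect agreement
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical ratings of ten key actions (1 = performed, 0 = not performed)
rater_a = [1, 1, 0, 1, 0, 1, 1, 0, 1, 1]
rater_b = [1, 1, 0, 1, 1, 1, 1, 0, 1, 0]
print(round(cohens_kappa(rater_a, rater_b), 2))  # prints 0.52
```

Here the raters agree on 80% of items, but because much of that agreement could arise by chance, kappa is noticeably lower than the raw percentage.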

Following Messick’s framework, which aimed to gather different sources of validity into one global concept (unified validity), Downing describes five sources of validity that must be assessed during the validation process [ 38 , 45 , 46 ]. Table 2 illustrates the development of a tool used in SBSA for a technical task according to the unified validity framework [ 38 , 45 , 46 ]. An alternative framework using three sources of validity for teamwork’s non-technical skills is presented in Table 3.

Example of the development of a tool to assess technical skill achievement in a simulated situation, based on work by Oriot et al., Downing, and Messick’s framework [ 38 , 46 , 47 ]

Example of the development of an assessment tool for the observation of teamwork in simulation [ 48 ]

A tool is validated in a given language. In theory, it can only be used in that language, given the nuances of interpretation [ 49 ]. In certain circumstances, a tool that has merely been translated, rather than translated and validated in the target language, can introduce semantic biases that affect the meaning of the content and its representation [ 49 – 55 ]. For each assessment sequence, the validity criteria consist of using different tools in different assessment situations and integrating them into a comprehensive program that considers all aspects of competency. The rating made with a validated tool for one situation must be combined with other assessment situations, since there is no “ideal” tool [ 28 , 56 ]. A given tool can be used with different professions, with participants at different levels of expertise, or in different languages if it has been validated for these situations [ 57 , 58 ]. In a summative context, a tool must have demonstrated a high level of validity before it is used, because of the high stakes for participants [ 56 ]. Finally, using or creating an assessment tool requires trainers to question its various aspects, from how it was created to its reproducibility and the meaning of the results it generates [ 59 , 60 ].

Two types of assessment tools should be distinguished: tools that can be adapted to different situations and tools that are specific to a situation [ 61 ]. Thus, technical skills may have a dedicated assessment tool (e.g., intraosseous access) [ 47 ] or an assessment grid generated from a list of pre-established and validated items (e.g., TAPAS scale) [ 62 ]. Non-technical skills can be observed using scales that are not situation-specific (e.g., ANTS, NOTECHS) [ 63 , 64 ] or that are situation-specific (e.g., TEAM scale for resuscitation) [ 57 , 65 ]. Assessment tools should be provided to participants and should be included in the scenario framework, at least as a reference [ 66 – 69 ]. In the summative assessment of a procedure, structured assessment tools should probably be used, such as a structured objective assessment form for technical skills [ 70 ]. Using a scale seems essential when assessing a technical gesture. As with other tools, any scale must be validated beforehand [ 47 , 70 – 72 ].

Consequences of undergoing the simulation-based summative assessment process

Summative assessment has two notable consequences for learning strategies. First, it may drive the learner’s behavior during the assessment, whereas what must be assessed is the targeted competencies, not the participant’s ability to adapt to the assessment tool [ 6 ]. Second, the key pedagogical concept of “pedagogical alignment” must be respected [ 23 , 73 ]: assessment methods must be coherent with the pedagogical activities and objectives. For this to happen, participants must have had formative simulation training focusing on the assessed competencies prior to the SBSA [ 24 ].

Participants have commonly been reported to experience mild (e.g., appearing slightly upset, distracted, teary-eyed, quiet, or resistant to participating in the debriefing) or moderate (e.g., crying, making loud and frustrated comments) psychological events during simulation [ 74 ]. While voluntary recruitment for formative simulation is commonplace, all students are required to take summative assessments in training. This mandatory participation in high-stakes assessment may have a more consequential psychological impact [ 26 , 75 ]. This impact can be modulated by training and assessment conditions [ 75 ]. First, repeated formative simulations reduce the psychological impact of SBSA on participants [ 76 ]. Second, transparency about the objectives and methods of assessment limits detrimental psychological impact [ 77 , 78 ]. Finally, detrimental psychological impacts are increased by abnormally high physiological or emotional stress, such as fatigue or stressful events in the 36 h preceding the assessment, and students with a history of post-traumatic stress disorder or other psychological disorders may be strongly and negatively affected by the simulation [ 76 , 79 – 81 ].

SBSA implementation must be optimized to limit its negative pedagogical and psychological impacts. It has been proposed that, ideally, the summative assessment should take into account the formative assessments already carried out [ 1 , 20 , 21 ]. Similarly, in continuing education, the professional context of the person assessed should be considered. In the event of failure, supportive feedback must be ensured and a new assessment offered if necessary [ 21 ].

Scenarios for simulation-based summative assessment

Some authors argue that summative and formative assessment scenarios differ [ 76 , 79 – 81 ]. The development of an SBSA scenario begins with the choice of a theme, which is most often agreed upon by experts at the local level [ 66 ]. Themes are most often chosen according to the participants’ competencies to be assessed, as included in the competency requirements for initial [ 82 ] and continuing education [ 35 , 83 ]. A literature review even suggests that themes should be chosen to cover all the competencies to be assessed [ 41 ]. These choices of themes and objectives also depend on the simulation tools technically available: “The themes were chosen if and only if the simulation tools were capable of reproducing ‘a realistic simulation’ of the case.” [ 84 ].

The main quality criterion for SBSA is that the cases selected and developed are guided by the assessment objectives [ 85 ]. The assessment objectives of each scenario must be clear in order to select the right assessment tool [ 86 ]. Scenarios should meet four main principles: predictability, programmability, standardizability, and reproducibility [ 25 ]. Scenario writing should include a specific script, cues, timing, and events to practice and assess the targeted competencies [ 87 ]. Implementing variable scenarios remains a challenge [ 88 ]; indeed, most authors develop only one scenario per topic and skill to be assessed [ 85 ]. There are no recommendations for setting a predictable scenario duration [ 89 ]. Based on our findings, we suggest some key elements for structuring an SBSA scenario in Table 4. For technical skill assessment, scenarios are short and the assessment is based on an analytical score [ 82 , 89 ]. For non-technical skill assessment, scenarios are longer and the assessment is based on both analytical and holistic scores [ 82 , 89 ].
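For technical skills, the analytical score mentioned above can, in its simplest form, be the proportion of pre-defined key actions that the rater observed. The sketch below assumes a binary checklist of equally weighted items; the function `analytical_score` and the checklist items are hypothetical, and a real SBSA would use the items of a validated instrument.

```python
def analytical_score(checklist_items, observed_actions):
    """Percentage of checklist key actions that the rater marked as performed."""
    if not checklist_items:
        raise ValueError("checklist must contain at least one item")
    # Reject observations that do not match any checklist item (likely a typo)
    unknown = set(observed_actions) - set(checklist_items)
    if unknown:
        raise ValueError(f"observed actions not on the checklist: {sorted(unknown)}")
    performed = sum(1 for item in checklist_items if item in observed_actions)
    return 100 * performed / len(checklist_items)


# Hypothetical checklist for a short technical-skill scenario
checklist = [
    "verifies equipment",
    "identifies insertion site",
    "applies aseptic technique",
    "performs procedure",
    "confirms correct placement",
]
observed = {
    "verifies equipment",
    "identifies insertion site",
    "performs procedure",
    "confirms correct placement",
}
print(analytical_score(checklist, observed))  # prints 80.0
```

A holistic score, by contrast, is a global rating by the rater and is not reduced to a count of items like this one.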

Key elements for structuring a summative assessment scenario

Debriefing, video, and research for simulation-based summative assessment

Studies have shown that debriefings are essential in formative assessment [ 90 , 91 ]. No such studies are available for summative assessment. Good practice nevertheless requires debriefing in both formative and summative simulation-based assessments [ 92 , 93 ]. In SBSA, debriefing is often brief feedback given at the end of the simulation session, either in groups [ 85 , 94 , 95 ] or individually [ 83 ]. Debriefing can also be done later with a trainer, with the help of video, or via written reports [ 96 ]. These debriefings make it possible to assess clinical skills for summative assessment purposes [ 97 ], and some tools have been developed to facilitate this assessment of clinical reasoning [ 97 ].

Video can be used for four purposes: session preparation, simulation improvement, debriefing, and rating (Table 5) [ 95 , 98 ]. In SBSA sessions, video can be used during the prebriefing to provide participants with standardized and reproducible information [ 99 ]. During the simulation, video (e.g., ultrasound loops and laparoscopy footage) can increase the realism of the situation. Simulation recordings can be reviewed for either debriefing or rating purposes [ 34 , 71 , 100 , 101 ]. Video is very useful for training raters (e.g., for calibration and recalibration) [ 102 ]. It enables raters to rate the participants’ performance offline and allows an external review if necessary [ 34 , 71 , 101 ]. Despite the technical difficulties to be considered [ 42 , 103 ], video-based automated scoring assistance can be expected to facilitate assessments in the future.
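As an illustration of rater calibration with recorded sessions, each rater can score the same reference videos, and their scores can be compared with an agreed reference score. The sketch below assumes numeric global scores, uses mean absolute deviation as the comparison, and applies a tolerance of 1 point; the function name, rater names, scores, and tolerance are all hypothetical choices, not values from the source.

```python
def calibration_report(rater_scores, reference_scores, tolerance=1.0):
    """Mean absolute deviation of each rater from reference scores on calibration videos.

    rater_scores maps a rater's name to their scores for the same videos, in the
    same order as reference_scores. A rater whose mean deviation exceeds
    `tolerance` is flagged for recalibration.
    """
    if not reference_scores:
        raise ValueError("need at least one calibration video")
    report = {}
    for rater, scores in rater_scores.items():
        if len(scores) != len(reference_scores):
            raise ValueError(f"{rater} did not score every calibration video")
        mad = sum(abs(s - r) for s, r in zip(scores, reference_scores)) / len(scores)
        report[rater] = (mad, mad > tolerance)
    return report


reference = [6, 4, 8, 5]  # hypothetical consensus scores for four calibration videos
raters = {"rater_A": [6, 5, 8, 5], "rater_B": [8, 6, 9, 7]}
for name, (mad, needs_recalibration) in calibration_report(raters, reference).items():
    print(name, mad, needs_recalibration)
# prints:
# rater_A 0.25 False
# rater_B 1.75 True
```

Mean absolute deviation is used here only because it is easy to interpret in score points; an agreement coefficient could serve the same purpose.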

Uses of video for simulation-based formative and summative assessment

The constraints associated with the use of video concern obtaining the participants’ agreement, complying with local rules, and ensuring that the structure in charge of the video-based assessment protects individuals’ rights and data security, at both the national level and higher levels (e.g., the European GDPR) [ 104 , 105 ].

In Table 5 , we list the main uses of video during simulation sessions found in the literature.

Research in SBSA can focus, as in formative assessment, on the optimization of simulation processes (programs, structures, human resources). Research can also explore the development and validation of summative assessment tools, the automation and assistance of assessment resources, and the pedagogical and clinical consequences of SBSA.

Trainers for simulation-based summative assessment

Trainers for SBSA probably need specific skills because of the high number of potential errors or biases in SBSA, despite the quest for objectivity (Table 6) [ 106 ]. The difficulty in ensuring objectivity is likely the reason why the use of self- or peer assessment in the context of SBSA is not well documented and is not yet supported by the literature [ 59 , 60 , 107 , 108 ].

Potential errors, effects, and bias in simulation-based summative assessment [ 109 , 110 ]

SBSA requires the development of specific scenarios, staged in a reproducible way, and the mastery of assessment tools to avoid assessment bias [ 111 – 114 ]. Meeting these requirements calls for specific abilities matched to the trainer’s different roles, and each role would require specific initial and ongoing training tailored to its tasks [ 111 , 113 ]. In the future, the roles and tasks of these trainers should be integrated into any “training of trainers” in simulation.

Implementation of simulation-based summative assessment in healthcare

The use of SBSA was described by Harden in 1975 with the Objective Structured Clinical Examination (OSCE) for medical students [ 115 ]. The summative use of simulation has been introduced in different ways depending on the professional field and the country [ 116 ]. There is more literature on certification at the undergraduate and graduate levels than on recertification at the postgraduate level. The use of SBSA in recertification is currently more limited [ 83 , 117 ]: participation is often mandatory, but it does not provide a formal assessment of competency [ 83 ]. Some countries are defining processes for the maintenance of certification in which simulation is likely to play a role (e.g., in the USA [ 118 ] and France [ 116 ]). Recommendations regarding the development of SBSA for OSCEs were issued by the AMEE (Association for Medical Education in Europe) in 2013 [ 12 , 119 ]. Combined with other recommendations addressing the organization of examinations using other immersive simulation modalities, in particular full-scale sessions with complex mannequins [ 22 , 85 ], they provide a solid foundation for implementing SBSA.

The overall process for ensuring a high-quality simulation-based examination is therefore defined but particularly demanding. It mobilizes many material and human resources (administrative staff, trainers, standardized patients, and healthcare professionals) and requires a long development time (several months to years depending on the stakes) [ 36 ]. We believe that the steps involved in implementing SBSA range from setting up a coordination team to supervising the scenario writers, raters, and standardized patients, and include taking into account logistical and practical pitfalls.

Developing a competency framework valid for an entire curriculum (e.g., medical studies) meets a fundamental need [ 7 , 120 ]. Such a framework makes it possible to identify the competencies to be assessed with simulation, those to be assessed by other methods, and those requiring triangulation across several assessment methods. This identification then guides scenario writing and the development of examinations with good content validity. Scenarios and examinations will form a bank of reproducible assessment exercises. The examination quality process, including psychometric analyses, is part of the development process from the beginning [ 85 ].

We have summarized in Table 7 the different steps in the implementation of SBSA.

Implementation of simulation-based summative assessment step by step

Recertification

Recertification programs for various healthcare domains are currently being implemented or planned in many countries (e.g., in the USA [ 118 ] and France [ 116 ]). This continues the movement to promote the maintenance of competencies, as illustrated in France by the creation of an agency for continuing professional development and in the USA by the Maintenance Of Certification [ 83 , 126 ]. The certification of healthcare facilities and even teams is also being studied [ 116 ]. Simulation is regularly integrated into these processes (e.g., in the USA [ 118 ] and France [ 116 ]). Although we found some commonalities between the certification and recertification processes, there are many differences (Table 8).

Commonalities and discrepancies between certification and recertification

Currently, when simulation-based training is mandatory (e.g., within the American Board of Anesthesiology’s “Maintenance Of Certification in Anesthesiology,” or MOCA 2.0® in the US), it is most often a formative process [ 34 , 83 ]. SBSA has a place in the recertification process, but there are many pitfalls to avoid. In the short term, we believe it will be easier to incorporate formative sessions as a first step. The current consensus seems to be that there should be no pass/fail recertification simulation without personalized, global professional support that is not limited to a binary fit/unfit approach [ 21 , 116 ].

Many important issues and questions remain in the field of SBSA. This discussion returns to the 7 topics we identified and highlights these points, their implications for the future, and possible directions for future research and guideline development for the safe and effective use of simulation in SBSA.

SBSA is currently used mainly in initial training, in uni-professional and individual settings, via standardized patients or task trainers (OSCE) [ 12 , 13 ]. In the future, SBSA will also be used in continuing education for professionals who will be assessed throughout their careers (recertification), as well as in interprofessional settings [ 83 ]. When certifying competencies, it is important to keep in mind the difference between the desired competencies and the observed performances [ 128 ]. Indeed, what constitutes a competency must be specifically defined [ 6 , 19 , 21 ]. Competencies are what we wish to evaluate during the summative assessment to validate or revalidate a professional for his/her practice. Performance is what can be observed during an assessment [ 20 , 21 ]. In this context, we consider three unresolved issues. The first issue is that an assessment only gives access to a performance at a given moment (“Performance is a snapshot of a competency”), whereas one would like to assess a competency more generally [ 128 ]. The second issue is how an observed performance, especially in simulation, reveals a real competency in real life [ 129 ]. In other words, does the success or failure of a single SBSA really reflect actual real-life competency [ 130 ]? The third issue is the assessment of team performance or competency [ 131 – 133 ]. Until now, SBSA has come from the academic field and has been an individual assessment (e.g., OSCE). Future SBSA could involve teams, driven by governing bodies, institutions, or insurers [ 134 , 135 ]. The competency of a team is not the sum of the competencies of the individuals who compose it. How can we assess teams as specific entities, both composed of individuals and independent of them?

To make progress on these three issues, we believe it is probably necessary to accept observed performance as an approximation of the assessed competency, but only within a specified scope of validity. Research in these areas is needed to define this scope and answer these questions.

Our analysis of the consequences of undergoing SBSA focused on psychological aspects and set aside more usual consequences, such as achieving (or not) the minimum passing score. Future research should address the consequences of SBSA more broadly, including how reliably SBSA determines whether someone is competent.

Rigor and method in the development and selection of assessment tools are paramount to the quality of summative assessment [ 136 ]. The literature shows that assessment tools must be specific to their intended use, that their intrinsic characteristics must be described, and that they must be validated [ 38 , 40 , 41 , 137 ]. These requirements must be respected to avoid two common issues [ 1 , 6 ]. The first issue is a poorly designed or constructed assessment tool. Such a tool can only produce poor assessments, because it cannot capture performance correctly and therefore cannot satisfactorily approach the skill to be assessed [ 56 ]. The second issue is poor or incomplete tool evaluation, or inadequate tool selection. If the tool is poorly evaluated, its quality is unknown [ 56 ], and the scope of any assessment made with it is limited by this uncertainty. If the tool is poorly selected, it will not accurately capture the performance being assessed. In either case, the summative assessment is compromised. It is currently difficult to find tools that meet all the required quality and validation criteria [ 56 ]. On the one hand, developing them requires complex and rigorous work; on the other hand, the potential number of tools required is large. The overall volume of work needed to rigorously produce assessment tools is therefore substantial. However, the literature provides the sources of validity evidence (content, response process, internal structure, relationship to other variables, and consequences) and the process for developing high-quality, reliable assessment tools [ 38 – 41 , 45 ]. It therefore seems important to systematize the use of these guidelines for the selection, development, and validation of assessment tools [ 137 ]. Work in this area is needed, and network collaboration could help move more quickly toward a bank of valid and validated assessment tools [ 39 ].
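Evidence for internal structure, one of the sources of validity listed above, is often reported with an internal-consistency coefficient such as Cronbach’s alpha: alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores), where k is the number of items. The sketch below is a minimal illustration of that formula, not a full validation procedure; the function name and the item scores are hypothetical.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a participants-by-items score matrix.

    item_scores: one list per participant, each holding that participant's
    score on each of the k items of the assessment tool.
    """
    if len(item_scores) < 2:
        raise ValueError("need scores from at least two participants")
    k = len(item_scores[0])
    if k < 2 or any(len(row) != k for row in item_scores):
        raise ValueError("every participant needs scores on the same k >= 2 items")
    # Sample variance of each item across participants
    item_variances = [variance([row[i] for row in item_scores]) for i in range(k)]
    # Sample variance of the participants' total scores
    total_variance = variance([sum(row) for row in item_scores])
    if total_variance == 0:
        raise ValueError("alpha is undefined when all total scores are equal")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical scores of five participants on a four-item rating scale (1 to 5)
scores = [
    [4, 5, 4, 4],
    [3, 3, 2, 3],
    [5, 5, 5, 4],
    [2, 2, 3, 2],
    [4, 4, 4, 5],
]
print(round(cronbach_alpha(scores), 2))  # prints 0.94
```

Alpha addresses internal consistency only; it provides no evidence about content, response process, relationship to other variables, or consequences.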

We focused our discussion of the consequences of SBSA on aspects other than determining competencies and passing rates. Establishing and maintaining psychological safety is mandatory in simulation [ 138 ]. Considering the psychological and physiological consequences of SBSA is fundamental to controlling and limiting negative impacts. Summative assessment has consequences for both participants and trainers [ 139 ]. These consequences are often ignored or underestimated, yet they can affect the conduct or results of the summative assessment. The consequences can be positive or negative. The “testing effect” can have a positive impact on long-term memory [ 139 ]. On the other hand, negative psychological (e.g., stress or post-traumatic stress disorder) and physiological (e.g., sleep disturbances) consequences can occur or worsen a fragile state [ 139 , 140 ]. These negative consequences can call into question the tools used and the assessments made. They must therefore be considered when designing and conducting SBSA, and we believe that strategies to mitigate their impact must be put in place. Trainers and participants must be aware of these difficulties to better anticipate them. The trainer faces a real duality: he/she has to carry out the assessment to determine a mark while guaranteeing the psychological safety of the participants. It seems fundamental to us that trainers master all aspects of SBSA, as well as the concept of the safe container [ 138 ], to maximize the chances of a good experience for all. We believe that ensuring a fluid pedagogical continuum, from training to (re)certification in both initial and continuing education, using modern pedagogical techniques (e.g., mastery learning, rapid cycle deliberate practice) [ 141 – 144 ] could help maximize the psychological and physiological safety of participants.

The structure and use of scenarios in a summative setting are unique and therefore require specific development and skills [ 83 , 88 ]. SBSA scenarios differ from formative scenarios in the educational objectives that guide their development. Summative scenarios are designed to assess a skill through observation of performance, while formative scenarios are designed to help participants learn and progress in mastering that same skill. Although there may be a continuum between the two, they remain distinct. SBSA scenarios must be predictable, programmable, standardizable, and reproducible [ 25 ] to ensure that performances are assessed fairly across participants. This is even more crucial when standardized patients are involved (e.g., OSCE) [ 119 , 145 ], in which case the standardized patient needs a specific script with expectations, as well as training. The problem is that there are currently many formative scenarios but few summative ones. Developing them requires rigor and expertise, which is time-consuming and draws on expert trainer resources. We believe that a goal should be to homogenize the scenarios and their application, along with preparing the human resources who will implement them (trainers and standardized patients). We believe one solution would be to develop a methodology for converting formative scenarios into summative ones in order to create a structuring model for summative scenarios. This would reinvest the time and expertise already spent developing formative scenarios.

Debriefing for simulation-based summative assessment

The place of debriefing in SBSA is currently undefined and raises important questions that need exploration [ 77 , 90 , 146 – 148 ]. Debriefing for formative assessment promotes knowledge retention and helps to anchor good behaviors while correcting less ideal ones [ 149 – 151 ]. In general, taking an exam promotes learning of the topic [ 139 , 152 ]. Formative assessment without debriefing has been shown to be detrimental, so it is reasonable to assume that the same is true of summative assessment [ 91 ]. The ideal modalities for debriefing in SBSA are currently unknown [ 77 , 90 , 146 – 148 ]. Integrating debriefing into SBSA raises a number of organizational, pedagogical, cognitive, and ethical issues that need to be clarified. From an organizational perspective, we consider debriefing to be time- and human resource-consuming. The extent of the organizational impact varies according to whether the feedback is automated, standardized, or personalized, and whether it is collective or individual. From an educational perspective, debriefing ensures pedagogical continuity and continued learning. We believe this notion is nuanced, depending on whether the debriefing is integrated into the summative assessment or instead follows it while focusing on formative elements. We believe that if the debriefing is part of the SBSA, it is no longer a “teaching moment,” and this must be factored into the instructional strategy. How should the trainer prioritize debriefing points between those established in advance for the summative assessment and those that emerge from an individual’s performance? From a cognitive perspective, whether the debriefing is integrated into the summative assessment may alter the interactions between the trainer and the participants.

We believe that if the debriefing is integrated into the SBSA, the participant will sometimes face the cognitive dilemma of whether to express his/her “true” opinions or instead attempt to provide the expected answers. The trainer then becomes uncertain of what he/she is actually assessing. Finally, from an ethical perspective, in the case of a mediocre or substandard clinical performance, there is the question of how the trainer resolves discrepancies between the observed behavior and what the participant reveals during the debriefing. What weight should be given to the simulation and to the debriefing in the final rating? We believe there is probably no single solution to how and when the debriefing is conducted during a summative assessment; rather, we promote adapting debriefing approaches (e.g., group or individualized debriefing) to various conditions (e.g., success or failure in the summative assessment). These questions need to be explored to determine how debriefing should ideally be conducted in SBSA. We believe a balance must be found that is ethically and pedagogically satisfactory, does not induce a cognitive dilemma for the trainer, and is practically manageable.

The skills and training of trainers required for SBSA are crucial and must be defined [ 136 , 153 ]. We consider that the skills and training for SBSA closely mirror those needed for formative assessment in simulation. This continuity is part of pedagogical alignment. These different steps have common characteristics (e.g., rules in simulation, scenario flow) and specific ones (e.g., using assessment tools, validating competence). To ensure pedagogical continuity, the trainers who supervise these courses must be trained in and have mastered simulation, in line with pedagogical theories. We believe SBSA requires new skills and potentially imposes a greater cognitive load on trainers, and solutions must be provided for both issues. For the new skills, we consider it necessary to adapt or complete the training of trainers by integrating the knowledge and skills needed to conduct SBSA properly: good assessment practices, assessment bias mitigation, rater calibration, mastery of assessment tools, etc. [ 154 ]. To optimize the cognitive load induced by the tasks and challenges of SBSA, we suggest that it could be helpful to divide the tasks among different trainer roles. We believe that conducting an SBSA therefore requires three types of trainers whose training is adapted to their specific role. First, there are the trainer-designers, who are responsible for designing the assessment situation, selecting the assessment tool(s), training the trainer-rater(s), and supervising the SBSA sessions. Second, there are the trainer-operators, who are responsible for running the simulation conditions that support the assessment. Third, there are the trainer-raters, who conduct the assessment using the assessment tool(s) selected by the trainer-designer(s), for which these trainer-raters have been specifically trained.

The high-stakes nature of SBSA requires a high level of rigor and professionalism from all three types of trainers, which implies that they have a working definition of the required skills and the necessary training to be up to the task.

Implementing simulation-based summative assessment in healthcare

Implementing SBSA is delicate: it requires rigor and respect for each step, and it must be evidence-based. While OSCEs are simulation-based, simulation is not limited to OSCEs. Summative assessment with OSCEs has been used and studied for many years [12, 13], and this literature is therefore a valuable source of lessons about summative assessment applied to simulation as a whole [22, 85, 155]. However, knowledge from OSCE summative assessment needs to be supplemented so that simulation can support summative assessment according to good evidence-based practices. Given the high stakes of SBSA, we believe it is necessary to rigorously and methodically adapt, during implementation, what has already been validated (e.g., scenarios, tools) and to proceed with caution for what has not yet been validated. As described above, many steps and prerequisites are necessary for optimal implementation, including (but not limited to) identifying objectives; identifying and validating assessment tools; preparing simulation scenarios, trainers, and raters; and planning a global strategy, from integrating the summative assessment into the curriculum to managing its consequences. SBSA must be conducted within a strict framework, for its own sake and for that of the people involved, because poor implementation would be detrimental to participants, trainers, and the practice of SBSA. This risk is greater for recertification than for certification [156]. While initial training can readily accommodate SBSA because the format is familiar (e.g., trainees take OSCEs at some point in their education), including SBSA in the recertification of practicing professionals is less straightforward and may be context-dependent [157]. We recognize that the consequences of failed recertification are potentially more serious, both psychologically and for professional practice. We believe that solutions that both fill these gaps and protect professionals and patients must be developed, tested, and validated.
Implementing SBSA therefore must be progressive, rigorous, and evidence-based to be accepted and successful [ 158 ].

Limitations

This work has some limitations that should be emphasized. First, it covers only a limited number of issues related to SBSA. The topic may not be covered in its entirety, and other questions of interest may not have been explored. Nevertheless, the NGT methodology allowed this work to focus on the issues that the panel considered most relevant and challenging. Second, the literature review method (state-of-the-art literature reviews expanded with a snowball technique) does not guarantee exhaustiveness, and some publications on the topic may have escaped the screening phase. However, we have likely identified the key articles on the questions explored. Any articles we missed would therefore likely be either of minor importance to the topic or concerned with questions not selected by the NGT. Third, this work was done by a French-speaking group, and a Francophone-specific approach to simulation, although not described to our knowledge, cannot be ruled out. This risk is reduced because the work draws on international literature from different specialties and countries, and because the panelists and reviewers came from different countries. Fourth, the analysis and discussion of the consequences of SBSA focused on psychological consequences. They therefore do not cover the full range of consequences, such as the impact on subsequent curricula or career pathways. Data on these consequences exist in the literature and probably deserve a dedicated body of work. Despite these limitations, we believe this work is valuable because it raises questions and offers some leads toward solutions.

Conclusions

SBSA is very promising, and demand for its application is growing. Indeed, SBSA is a logical extension of simulation-based formative assessment and of the development of competency-based medical education. It is probably wise to anticipate and plan approaches to SBSA, as many important moving parts, questions, and consequences are emerging. Clearly identifying these elements and their interactions will aid in developing reliable, accurate, and reproducible models. All of this requires meticulous and rigorous preparation commensurate with the challenges of certifying or recertifying the skills of healthcare professionals. We have explored current knowledge on SBSA and shared an initial mapping of the topic. Among the seven topics investigated, we identified three groups:

(i) areas with robust evidence (what can be assessed with simulation?);

(ii) areas with limited evidence that can be supported by expert opinion and research (assessment tools, scenarios, and implementation);

(iii) areas with weak or emerging evidence requiring guidance from expert opinion and research (consequences, debriefing, and trainers) (Fig. 1).

We modestly hope that this work will support reflection on SBSA, inform future investigations, and help drive guideline development for SBSA.

Acknowledgements

The authors thank SoFraSimS Assessment with simulation group members: Anne Bellot, Isabelle Crublé, Guillaume Philippot, Thierry Vanderlinden, Sébastien Batrancourt, Claire Boithias-Guerot, Jean Bréaud, Philine de Vries, Louis Sibert, Thierry Sécheresse, Virginie Boulant, Louis Delamarre, Laurent Grillet, Marianne Jund, Christophe Mathurin, Jacques Berthod, Blaise Debien, and Olivier Gacia who have contributed to this work. The authors thank the external experts committee members: Guillaume Der Sahakian, Sylvain Boet, Denis Oriot and Jean-Michel Chabot; and the SoFraSimS executive Committee for their review and feedback.

Abbreviations

GDPR: General Data Protection Regulation

NGT: Nominal group technique

OSCE: Objective structured clinical examination

SBSA: Simulation-based summative assessment

Authors’ contributions

CB helped with the study conception and design, data contribution, data analysis, data interpretation, writing, visualization, review, and editing. FL helped with the study conception and design, data contribution, data analysis, data interpretation, writing, review, and editing. RDM, JWR, and DB helped with the study writing, and review and editing. JWR and DB helped with the data interpretation, writing, and review and editing. LM, FJL, EG, ALP, OB, and ALS helped with the data contribution, data analysis, data interpretation, and review. The authors read and approved the final manuscript.

This work has been supported by the French Speaking Society for Simulation in Healthcare (SoFraSimS).

This work is part of CB's PhD, which has been supported by grants from the French Society for Anesthesiology and Intensive Care (SFAR), the Arthur Sachs-Harvard Foundation, the University Hospital of Caen, the North-West University Hospitals Group (G4), and the Charles Nicolle Foundation. The funding bodies had no role in the design of the study; the collection, analysis, and interpretation of the data; or the writing of the manuscript.

Availability of data and materials

All data generated or analyzed during this study are included in this published article.

Declarations

Ethics approval and consent to participate

Not applicable.

Consent for publication

Competing interests

The authors declare that they have no competing interests.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

- 1. van der Vleuten CPM, Schuwirth LWT. Assessment in the context of problem-based learning. Adv Health Sci Educ Theory Pract. 2019;24:903–914. doi: 10.1007/s10459-019-09909-1.

- 2. Boulet JR. Summative assessment in medicine: the promise of simulation for high-stakes evaluation. Acad Emerg Med. 2008;15:1017–1024. doi: 10.1111/j.1553-2712.2008.00228.x.

- 3. Green M, Tariq R, Green P. Improving patient safety through simulation training in anesthesiology: where are we? Anesthesiol Res Pract. 2016;2016:4237523. doi: 10.1155/2016/4237523.

- 4. Krage R, Erwteman M. State-of-the-art usage of simulation in anesthesia: skills and teamwork. Curr Opin Anaesthesiol. 2015;28:727–734. doi: 10.1097/ACO.0000000000000257.

- 5. Askew K, Manthey DE, Potisek NM, Hu Y, Goforth J, McDonough K, et al. Practical application of assessment principles in the development of an innovative clinical performance evaluation in the entrustable professional activity era. Med Sci Educ. 2020;30:499–504. doi: 10.1007/s40670-019-00841-y.

- 6. Wass V, Van der Vleuten C, Shatzer J, Jones R. Assessment of clinical competence. Lancet. 2001;357:945–949. doi: 10.1016/S0140-6736(00)04221-5.

- 7. Boulet JR, Murray D. Review article: assessment in anesthesiology education. Can J Anaesth. 2012;59:182–192. doi: 10.1007/s12630-011-9637-9.

- 8. Bauer D, Lahner F-M, Schmitz FM, Guttormsen S, Huwendiek S. An overview of and approach to selecting appropriate patient representations in teaching and summative assessment in medical education. Swiss Med Wkly. 2020;150:w20382. doi: 10.4414/smw.2020.20382.

- 9. Park CS. Simulation and quality improvement in anesthesiology. Anesthesiol Clin. 2011;29:13–28. doi: 10.1016/j.anclin.2010.11.010.

- 10. Higham H, Baxendale B. To err is human: use of simulation to enhance training and patient safety in anaesthesia. British Journal of Anaesthesia [Internet]. 2017 [cited 2021 Sep 16];119:i106–14. Available from: https://www.sciencedirect.com/science/article/pii/S0007091217541215.

- 11. Mann S, Truelove AH, Beesley T, Howden S, Egan R. Resident perceptions of competency-based medical education. Can Med Educ J. 2020;11:e31–43. doi: 10.36834/cmej.67958.

- 12. Khan KZ, Ramachandran S, Gaunt K, Pushkar P. The objective structured clinical examination (OSCE): AMEE Guide No. 81. Part I: an historical and theoretical perspective. Med Teach. 2013;35(9):e1437–1446. doi: 10.3109/0142159X.2013.818634.

- 13. Daniels VJ, Pugh D. Twelve tips for developing an OSCE that measures what you want. Med Teach. 2018;40:1208–1213. doi: 10.1080/0142159X.2017.1390214.

- 14. Humphrey-Murto S, Varpio L, Gonsalves C, Wood TJ. Using consensus group methods such as Delphi and Nominal Group in medical education research. Med Teach. 2017;39:14–19. doi: 10.1080/0142159X.2017.1245856.

- 15. Haute Autorité de Santé. Recommandations par consensus formalisé (RCF) [Internet]. Haute Autorité de Santé. 2011 [cited 2020 Oct 29]. Available from: https://www.has-sante.fr/jcms/c_272505/fr/recommandations-par-consensus-formalise-rcf.

- 16. Humphrey-Murto S, Varpio L, Wood TJ, Gonsalves C, Ufholz L-A, Mascioli K, et al. The use of the delphi and other consensus group methods in medical education research: a review. Academic Medicine [Internet]. 2017 [cited 2021 Jul 20];92:1491–8. Available from: https://journals.lww.com/academicmedicine/Fulltext/2017/10000/The_Use_of_the_Delphi_and_Other_Consensus_Group.38.aspx.

- 17. Booth A, Sutton A, Papaioannou D. Systematic approaches to a successful literature review [Internet]. Second edition. Los Angeles: Sage; 2016. Available from: https://uk.sagepub.com/sites/default/files/upm-assets/78595_book_item_78595.pdf .

- 18. Morgan DL. Snowball Sampling. In: Given LM, editor. The Sage encyclopedia of qualitative research methods [Internet]. Los Angeles, Calif: Sage Publications; 2008. p. 815–6. Available from: http://www.yanchukvladimir.com/docs/Library/Sage%20Encyclopedia%20of%20Qualitative%20Research%20Methods-%202008.pdf .

- 19. ten Cate O, Scheele F. Competency-based postgraduate training: can we bridge the gap between theory and clinical practice? Acad Med. 2007;82:542–547. doi: 10.1097/ACM.0b013e31805559c7.

- 20. Miller GE. The assessment of clinical skills/competence/performance. Acad Med. 1990;65:S63–67. doi: 10.1097/00001888-199009000-00045.

- 21. Epstein RM. Assessment in medical education. N Engl J Med. 2007;356:387–396. doi: 10.1056/NEJMra054784.

- 22. Boulet JR, Murray DJ. Simulation-based assessment in anesthesiology: requirements for practical implementation. Anesthesiology. 2010;112:1041–1052. doi: 10.1097/ALN.0b013e3181cea265.

- 23. Bédard D, Béchard JP. L’innovation pédagogique dans le supérieur : un vaste chantier. Innover dans l’enseignement supérieur. Paris: Presses Universitaires de France; 2009. p. 29–43.

- 24. Biggs J. Enhancing teaching through constructive alignment. High Educ. 1996;32:347–64. doi: 10.1007/BF00138871.

- 25. Wong AK. Full scale computer simulators in anesthesia training and evaluation. Can J Anaesth. 2004;51:455–464. doi: 10.1007/BF03018308.